Full transcript

Note: We encourage you to listen to the audio if you are able, as it includes emotion not captured by the transcript. Please check the corresponding audio before using any quotes.

[PERCUSSIVE SYNTHESIZER MUSIC]

NELUFAR HEDAYAT, HOST:

This is Course Correction from Doha Debates. I’m Nelufar Hedayat. Each episode, we’ll look at one big global issue…and meet the people who are actively working to fix it.

We’re not just a podcast — we make a bunch of wondrous digital films and create stuff for our socials. We also put on a series of big live debates, with speakers who have inspiring ideas about how to change the world.

The goal of our live debate series is to build bridges between experts with different perspectives, not pit them against one another. We are looking for what connects us, rather than separates us. And we want to focus on the solutions.

In a recent live debate in our hometown Doha, we discussed artificial intelligence — its promises and its problems. AI might seem like sci-fi fantasy, but forms of artificial intelligence are already changing our everyday lives, from Google Translate, Siri and Alexa to mass surveillance. But we’re only seeing the beginning of the artificial intelligence boom.

[FUTURISTIC XYLOPHONE MUSIC]

NELUFAR: In the future, what if we can teach machines to make judgments? To make perceptions in a way that can outpace the human mind? Machine learning has the potential to alter the course of human existence. AI would make it possible to fight wars without soldiers, or find any human on Earth in real-time. But it could also deepen racial discrimination and put lives at risk.

At the debate, each of our speakers presented their views on the risks and benefits of artificial intelligence.

[ARCHIVAL AUDIO]

MAN WITH SWEDISH ACCENT:

AI is not just one more cool technological advance, not just one more interesting gadget.

WOMAN WITH KENYAN ACCENT:

AI is and will continue to provide us the opportunity to rewrite our future. And from where I stand, this is a future that is filled with a strong African voice.

MAN WITH BRITISH ACCENT:

Here’s something that actually does concern me a lot more than those potential Russian robots: And that’s the fact that most politicians are generally quite bad at making decisions about technology.

WOMAN WITH AMERICAN ACCENT:

AI can compound the very social inequalities its champions hope to overcome. AI magnifies the flaws of its makers — us.

NELUFAR: That last speaker is Joy Buolamwini, a computer scientist and digital activist at the MIT Media Lab. She’s the founder of the Algorithmic Justice League, which fights racial and gender bias in artificial intelligence.

Joy is also an artist. She describes herself as a poet of code, who tells stories that make “daughters of diasporas dream and sons of privilege pause.”

I sat down with her to talk more about what it means that facial recognition technology doesn’t always work on black faces, and what the consequences of that are.

NELUFAR: “Buolamwini,” is that right?

JOY BUOLAMWINI:

So I always say “bowl of lamb weenies.” So bowl, lamb, weenie.

NELUFAR: Buolamwini. Perfect. There we go.

So how do you, as a young black girl, become interested in this incredibly male, possibly white space?

JOY: Yeah. So for me — well, I’m 29 now, but I guess I’m still a young black girl, which is cool.

NELUFAR: Younger than me!

[LAUGHTER]

JOY: So I’m the daughter of an artist and a scientist and I, when I was little, my dad would take me to his lab. I would feed cancer cells, he would be running some sort of analytics on his computers. He had these huge Silicon Graphics computers that would render protein structures. So when I was little, I would go with my dad, and he’s working on computer-aided drug design. Right? So like — that’s just how I grew up. And we had, we had a computer lab in my house. I didn’t know this wasn’t normal. Just —

NELUFAR: OK! It was your normal.

JOY: My normal was being surrounded by computers and being in an environment that encouraged me to be curious and to explore. I would break apart computers, set up networks, and it was just like, “Have fun!” It never felt foreign to me, or something that I couldn’t do.

NELUFAR: I just want to ask you very quick, basic questions: What is artificial intelligence?

JOY: So artificial intelligence, broadly speaking, is trying to see if we can have machines make judgments the way humans might make judgments, perceive the world. So you can think of computer vision or you can think of voice recognition. And also how we might navigate the world. So think of self-driving cars. There have been many different approaches to artificial intelligence. And the one that’s working very well right now is something called supervised learning. So you’re teaching the AI to learn patterns. So for example, if you want to teach an AI to recognize a face, instead of, in the past, trying to code every single thing that might make up a face, too complicated, what you do instead is say, “Here is an example of a data set of what faces look like. And also here’s some examples of things that aren’t faces. Just so you know, right? Faces, not a face. Faces, not a face.” So eventually you can create a system that can figure out the pattern of a face. The problem can come in, is if all of that training data — let’s say you only ever saw white faces.

So at the point I was really frustrated, I was working on this project. I thought I had the facial recognition code right, or the face detection code. But it wouldn’t get my face. So the first thing I actually did was I drew up a face on my hand, and it detected the face on my hand. So I was like, wait, now if it can detect the face on my hand, what else do I have in my office? So I had a white mask in my office, ’cause it was close to Halloween, and we had a party. And so I put it, I put the white mask — and I was just, I was first surprised ’cause I was like there’s no way. Right? OK, the hand, but the white mask? So literally I was just, I was bemused. And then I was like wow, Fanon already said it: “Black skin, white mask.” I just started thinking of all of these other references of what it means to change yourself to fit a system or society that wasn’t designed for you.
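The "faces, not a face" training described above can be sketched in a few lines of Python. This is only a toy illustration, not the code Joy was working with: the two-number feature vectors, the `nearest_label` helper, and every example value are made up for this sketch, and real face detectors learn from pixel data with far more elaborate models. It shows how a system trained on a narrow set of labeled examples can miss a face unlike any it has seen, and how broader training data changes the result.

```python
import math

def nearest_label(sample, training_set):
    """Return the label of the training example closest to `sample`.

    This is a 1-nearest-neighbour classifier, one of the simplest
    forms of supervised learning (a stand-in chosen for brevity).
    """
    closest = min(training_set, key=lambda example: math.dist(example[0], sample))
    return closest[1]

# Hypothetical labeled examples: (feature vector, label).
# Every "face" in this set comes from one narrow cluster.
skewed_training = [
    ((0.9, 0.8), "face"),
    ((0.8, 0.9), "face"),
    ((0.1, 0.1), "not a face"),
    ((0.2, 0.0), "not a face"),
]

# A face whose features differ from every face in the training set.
unseen_face = (0.3, 0.4)

# The same data plus faces that resemble the previously unseen one.
broader_training = skewed_training + [
    ((0.35, 0.45), "face"),
    ((0.3, 0.5), "face"),
]

print(nearest_label(unseen_face, skewed_training))   # not a face
print(nearest_label(unseen_face, broader_training))  # face
```

The classifier itself is identical in both calls. Only the training examples differ, which is the sense in which the bias comes from the data rather than from any malicious line of code.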

NELUFAR: Do you not just feel subhuman at that moment? Like this, this thing that — this world that you are helping to create does not accept you for who you are?

JOY: What I realized was, these assumptions that we were making about the progress of AI weren’t true. So for me, it was saying, OK, I’m reading all of these articles that say we’ve arrived in the age of automation, and my own experience is showing me that there are serious shortcomings. So it wasn’t that I felt less than, I felt the AI was less than.

NELUFAR: You mentioned a lot “the coded gaze” in your work. Can you tell me what the coded gaze is and how it affects artificial intelligence?

JOY: Sure, so some people might be familiar with terms like the male gaze or the white gaze, which is essentially saying the identity you have, your position in the world, influences what you perceive and how you perceive it, including what’s important. So when I talk about the coded gaze, I’m talking really about power. And the coded gaze also reflects our prejudices. So that’s why I came up with the term, because I was realizing, just like you have the male gaze or the white gaze, which might manifest itself in the kind of media that we’re seeing, the kind of news stories we hear, this thing is also going on in the technology we’re building.

NELUFAR: It’s a very useful term. If the coded gaze is predominantly white, predominantly male, what is causing this? What is the, what is the cause of this very, very restricted gaze? Is it the data sets that we’re feeding into the algorithms? Is it the people that are translating or making sense of the data, or is it the software itself? Who is being malicious here?

JOY: Well, the interesting thing is no one has to be intentionally malicious for the coded gaze to manifest. The coded gaze is a reflection of who holds power. So we were talking earlier about facial recognition, it can be biased and so forth. So my question was, well, we have all these, you know, smart people working on this tech. How did we get to this point? And so I started doing the research, and I looked at some of the key data sets that were being used by other researchers, and even a key data set that came from the National Institute of Standards and Technology. This is supposed to be the gold standard data set. So I looked at that gold standard data set. And then I found out it was 75 percent male and 80 percent lighter skin. And if you looked for women of color — less than 5 percent. The way they collected it is they thought, “Let’s collect public figures.” So now if you look at who holds power in the world, and you’re saying, let’s do a convenience data collection, and you’re doing public figures, you’re going to reflect the patriarchy. So in some ways you can say society is to blame, but you can also say specifically those people who were developing the data sets, not being explicit in checking to make sure what they were creating was actually representative of the world, was a problem.
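The kind of composition check Joy describes can be sketched as a simple tally. The sketch below is an assumption-laden illustration, not her actual audit: the 100 records are invented so that the totals echo the figures quoted above (75 percent male, 80 percent lighter skin, under 5 percent darker-skinned women), and the field names and helper functions are hypothetical.

```python
from collections import Counter

def composition(records, attribute):
    """Return the share of records for each value of one attribute."""
    counts = Counter(record[attribute] for record in records)
    return {value: count / len(records) for value, count in counts.items()}

def intersectional_share(records, **attributes):
    """Return the share of records matching every given attribute value."""
    matches = sum(
        all(record[key] == value for key, value in attributes.items())
        for record in records
    )
    return matches / len(records)

# Invented toy data set of 100 records (not real benchmark data).
records = (
    [{"gender": "male", "skin": "lighter"}] * 59
    + [{"gender": "male", "skin": "darker"}] * 16
    + [{"gender": "female", "skin": "lighter"}] * 21
    + [{"gender": "female", "skin": "darker"}] * 4
)

print(composition(records, "gender"))  # {'male': 0.75, 'female': 0.25}
print(composition(records, "skin"))    # {'lighter': 0.8, 'darker': 0.2}
print(intersectional_share(records, gender="female", skin="darker"))  # 0.04
```

Looking at one attribute at a time would show women at 25 percent and darker skin at 20 percent. Only the intersectional count reveals that darker-skinned women make up 4 percent of this toy set.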

NELUFAR: So these engineers, these coders, these large organizations and tech companies, by having a laissez-faire attitude, by saying, “Well, we’re just collecting the information that’s out there to create these data sets.” You have a problem with that inherently?

JOY: Oh, absolutely. Because the problem with convenience sampling is, again, if you’re not intentional about being inclusive, what you will do is reproduce existing inequalities. And that’s what’s happening. So tech companies have a responsibility to say, “We can’t just say we’re going to do convenience sampling,” right? “We have to be more rigorous.”

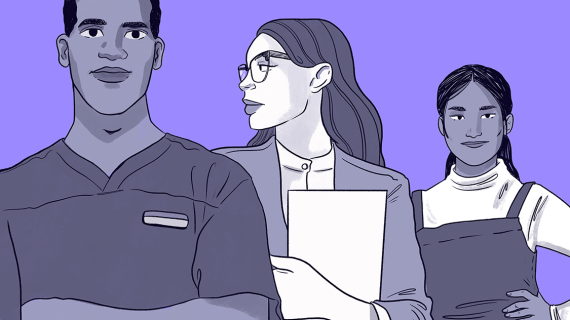

NELUFAR: What does the coding community look like?

JOY: Kind of like the white mask, really. You know, not particularly diverse at the moment.

In most large tech companies, less than 20 percent of the technical workers are women. And if you look at people of color, you’re closer to more of the 2 percent range, right? So if who’s designing these systems isn’t that diverse, we can run into some issues. Then there’s also the actual development process. How are these systems being built? What data’s being used to train this?

NELUFAR: You, you talk a lot about transparency when it comes to the AI community at large. What is transparency like in the community and what are you hoping to see more of?

JOY: So there needs to be transparency during the design, development and deployment of AI systems. So when we’re thinking about design, we have to think about who’s designing in the first place. Like many other computer scientists, we kind of use off-the-shelf parts — code libraries, right?

NELUFAR: Right.

JOY: So instead of having to build everything from scratch, I’m like, look, let me grab this part, let me grab that part. But if we don’t have transparency in how the system was trained or what the limitations are, you’re going to use it hoping or assuming it works well for everybody, but that might not be the case.

NELUFAR: Who should be responsible for making sure that that is reflected — you and I, as people of color, as women — that we should be reflected more accurately in the technology that’s designed for us to use?

JOY: Well, it’s an interesting conversation about the governance of AI, because even if you make these tech systems more inclusive, it can be used for surveillance. So now you have a situation where I have highly accurate facial recognition. Now let’s put that highly accurate facial recognition on a drone — with a camera and a gun. So now this conversation is more than, “Was I included in the data set?” Maybe you don’t want to be in that particular data set.

NELUFAR: That’s so interesting! I’d never thought of it in that way. The more the artificial intelligence and data can recognize you —

JOY: Yeah.

NELUFAR: You are now involved.

JOY: You are. And so I call this a cost of inclusion, but there are also costs of exclusion. There is a recent study that came out from researchers at Georgia Institute of Technology, and they were looking at pedestrian-tracking systems, trying to see if I can see a person on the street, because if you have a self-driving car, you don’t want people to get hit. So this is what they found out: They tested these systems and found they were less accurate for people with darker skin as compared to lighter skin. So now this is a cost of exclusion, right? If I’m not included and you have autonomous vehicles all over the place, that’s also going to be a problem. So you have cost of inclusion: I end up in mass surveillance. Cost of exclusion: Maybe I get hit.

NELUFAR: How do we eradicate these anomalies, and these exclusion and inclusion costs, when it comes to artificial intelligence?

JOY: Absolutely. I think the first thing we have to keep in mind is, people need to have a choice in whether or not AI is being used. Affirmative consent is not a current pattern in the way we develop these systems. It’s just assumed that hey, you walk in the street, you might get hit, right? Or if I log into a particular service, they’re going to harvest my data, and this is what it takes to be part of the connected digital world. But that’s actually a design choice. It doesn’t have to be the case that just by entering a place I can automatically take your face information. So I think that’s the first thing — making sure people actually have a choice.

The other thing is meaningful transparency. If I’m going to a job interview that’s using facial analysis technology to infer my emotional engagement or my problem-solving abilities — and there’s a company, HireVue, that claims to be able to do this —

NELUFAR: Wow.

JOY: — I need to know. We’ve gotten bias in the wild, reports about companies like this, where somebody will say, “It’s only after the fact I found out they were even using this kind of technology in the first place.” So if you don’t have meaningful transparency, you can have no due process. If something goes wrong, how can you contest, right? So affirmative consent, meaningful transparency and then also continuous oversight.

In the UK, they started piloting facial recognition technology, but made the requirement that the police had to report the performance metrics. When they reported the performance metrics —

NELUFAR: Oh, goodness.

JOY: Ninety percent — over 90 percent — false positive match rates. This was May 2018. More than 2,400 innocent people being mismatched with criminal suspects. So even if these technologies went through certain checks or you’re saying, “Yes, the police is using this tech, right? There’s transparency there.” You still have to check how it’s impacting society. It’s something continuous, this is something you have to keep an eye on.

NELUFAR: Online, some of the critics say that actually you put a social justice spin on data sets and technology that has no bias inherently. What do you say to people who charge you with this?

JOY: Well, I say check the data set. So here’s a gold standard data set that’s meant to be reflective of the world. It’s 75 percent male, it’s 80 percent lighter skin. So I don’t — like, the skew in the data set is empirical.

NELUFAR: But the point being, that this is a human fault, not the fault of artificial intelligence. And by putting this kind of spin, they say, onto it, what you’re doing is, you’re turning, you’re making AI, you’re vilifying artificial intelligence or coding or these algorithms —

JOY: I hear what you’re saying. AI is all ultimately a reflection of humanity. So all of these critiques are about the people who are creating the technology. In fact, this is a point I try to make, because some people will be like, “Oh yeah, the machine is biased but we’re not so” — like where do you think the machine is getting the bias from? As far as the social justice spin, I think there is no greater purpose for me in doing the work than making sure that the gains made with the civil rights movement or the women’s movement or the labor movements are not undermined by machines because we assume they’re neutral. So yes, you can call it social justice, etc. We have the empirical, peer-reviewed research, you know, so regardless of my own leanings, what we’re showing is true. AI systems can produce sexist results, they can produce racist results. There are also issues when it comes to age. So if you’re somebody who’s going to age in this world or encounter AI, we all have to be vigilant.

NELUFAR: You did a beautiful spoken-word piece in which you, you kind of tied in your artistic side and your very artificial intelligent machine-learning coding side. And it was about facial classification software that wasn’t able to properly classify first lady Michelle Obama as a woman and wasn’t able to identify the first black congresswoman, Shirley Chisholm, as a woman, either.

JOY: Yeah.

NELUFAR: I mean, it was ridiculous. And you talk about it in the piece.

JOY: Even Serena Williams was being labeled “male.”

NELUFAR: But not to be, but it was other things — like for example, it couldn’t recognize an Afro and thought it was a toupee.

JOY: Right. Or a coonskin cap.

NELUFAR: Wow. I don’t even — or, or, or it wouldn’t recognize — oh, let’s say that — these women had walrus mustaches.

JOY: That was epic.

NELUFAR: That was astonishing to me!

JOY: I was just like, wow.

NELUFAR: I mean —

JOY: IBM.

NELUFAR: Is that motivation for you to continue to do what you do? I want to understand your drive and your passion, ’cause it’s palpable in every word you say. Like where do you get this drive?

JOY: Well, for me, it’s understanding that it’s not just about facial analysis technology. AI is trying to decide if you get a job, right? AI is deciding if you have access to medical treatments. And if we get that wrong, it’s more than just saying, “Oh, your Afro looks like a toupee.” You have real-world consequences. And that’s what drives me, knowing that so many of the advances people before me have fought for, in terms of equality. All of that can be erased because we introduce AI that’s biased, and we’re not checking. And so then it seems like, “Oh, well, these decisions are objective because they came from a machine.” And while we would like to believe that, because we’re using machine learning that’s learning from data that we have created that reflect structural inequalities, it’s not going to always be fair if we’re not intentional. So that’s what drives me — because real lives are at stake.

NELUFAR: Wonderful. Thank you so much.

JOY: Thank you.

NELUFAR: Before we go, I asked Joy to recite a bit of my favorite of her poems. It’s called “AI, Ain’t I A Woman?”

JOY: My heart smiles as I bask in their legacies

Knowing their lives have altered many destinies

In her eyes, I see my mother’s poise

In her face, I glimpse my auntie’s grace

Can machines ever see my queens as I view them?

Can machines ever see our grandmothers as we knew them?

NELUFAR: That is all for the show today. To watch the full live debate, go to YouTube and search for “Doha Debates artificial intelligence.” You can see all of the speakers’ proposed solutions, and tell us what you think. How are you affected by bias in artificial intelligence? And what would you do to fix it? I want to hear from you. Tweet us at @DohaDebates. Or get in touch with me at @nelufar.h.

Course Correction is written and hosted by me, Nelufar Hedayat. The show is produced by Doha Debates and Transmitter Media. Doha Debates is a production of Qatar Foundation. Special thanks to our team at Doha Debates — Japhet Weeks, Amjad Atallah and Jigar Mehta. This episode was mixed by Dara Hirsch. If you like what you hear, rate and review the show. It helps other people find us. Join us for the next episode of Course Correction wherever you get your podcasts.
